Engineering

Dec 2, 2025

AAA: Agentic, Autonomous, Adaptive Intelligence - lab | up >/ conf/5 Keynote

Jeongkyu Shin

Founder / Researcher / CEO

This article is a summary of Jeongkyu Shin's keynote speech on September 24, 2025 at lab | up > /conf/5.

AAA: Agentic, Autonomous, Adaptive Intelligence

Lablup's 10th anniversary keynote: The next decade ahead

I want to thank everyone who attended today's conference. We at Lablup have been developing Backend.AI, an infrastructure operating system for AI, since 2015 under the motto "Make AI Accessible". This system operates like a massive operating system that treats Linux nodes as if they were processes. Many of you have returned to join us again, and I sincerely appreciate your presence.

From slipstream strategy to the AI industrial revolution

Two years ago at our conference, we introduced the concept of "Slipstream Strategy" as an approach to AI. This concept refers to the effect where a car closely following a fast-moving vehicle ahead can travel farther or faster with less fuel because the leading car reduces wind resistance. In racing, this slipstream technique represents an advanced skill that reduces input costs while providing opportunities to overtake when the moment arrives. Our strategy aimed to identify and exploit weaknesses of big tech companies in situations where we could not match their capital investments. We have introduced various services and technologies through this slipstream strategy.

Last year, we discussed how the age of AI exploration—when everyone rushed into AI without knowing what it contained—was ending, and the age of AI industrialization was beginning. Some had just started bringing back spices, while others had already dug canals on the other side. Some attempted to circumnavigate the globe to prove the Earth was round. Similarly, some companies build small-scale businesses or models, while others have already constructed AI pipelines generating revenue or deployed services globally. Yet another group navigates toward general intelligence, striving to create humanity's next form of intellect.

We shared these observations at last year's conference. In the year that followed, a significant one for AI, the field went through changes on the scale of an industrial revolution. Just as the Age of Exploration led into the Industrial Revolution, and the Industrial Revolution transformed the world, AI models are now iterated and released at accelerating speed, with cycles shrinking from months to weeks. The participants have also diversified beyond big tech companies to include numerous model developers and nations.

Ten years, and the next decade

We started our company exactly ten years and five months ago with the goal of making AI accessible to everyone. Since 2022, alongside the flow of change, we have dedicated ourselves to supporting AI operation and development across all scales—from IoT devices to hyperscale infrastructure. Last year, we spoke about making scaling, acceleration, and inference extremely easy—in short, making AI simple. We discussed this integrated enterprise solution. This year, we received requests for a big-picture overview rather than detailed explanations. Since we have limited keynote time and many speakers will share various topics today, I want to discuss the future—the next ten years—as we commemorate our tenth anniversary.

These ideas may sound somewhat abstract. However, when we described at our founding ten years ago what we do today, most of the feedback was that it sounded like science fiction or pure imagination. When we said that, as deep learning developed, machines could replace not only knowledge but also human intellectual and creative activities, many people laughed. In that same spirit, I want to share what we plan to do over the next ten years.

I remember the slides from 2016, when we traveled around introducing our company after founding it in 2015. Our first open-source version launched in November that year under the name "Sorna." Tremendous changes have occurred since then.

Five millennia of delegation and three substitutions

Before examining the past ten years and the next decade, let us briefly look back at the past five millennia. Humanity has substituted certain things over the past five thousand years and has continued substituting others through technological development over the past two hundred years. Humanity has continuously pursued delegation. When we ask why technology develops, the answer is that humans develop technology to keep delegating what they do. In a sense, the fundamental human motivation is "wanting to remain still, wanting to remain endlessly still." Ironically, however, as we strive to remain still, we live in an era where working hours per week continue to increase. When we lived hanging from trees, we worked less than 20 hours per week. Now the hours keep increasing, and with AI, they will likely increase further.

Nevertheless, nearly all technology until now has focused on replacing human physical labor. As you well know, changes over the past 50 years have replaced human knowledge work. While engines and machines previously replaced physical labor, computers and computation have recently replaced human knowledge work. Everyone recognizes computers as the representative example, and at some point, we began searching for materials and conducting research on the internet instead of in libraries. We then gained the ability to analyze millions of records through Excel or databases instead of calculating everything by hand or using an abacus.

The development over the past five years represents the third substitution. While we have substituted physical activities and then knowledge activities, we now live in an era where human creative activities face replacement. Deep learning-based generative models gained significant attention starting in 2022. Over five years from GPT-3's emergence in 2020 until now, we discovered—not invented, but discovered—generative AI, and through extremely rapid iteration, we have experienced that human creative activities can be replaced to some degree.

Measurement enables replacement

How does this become possible? If we look carefully at what lies behind all three substitutions, the first two and now this third one, we may realize that each became possible because we learned to measure and evaluate the underlying system.

What does this mean? We have always quantified things in some way. Before we can mass-produce anything, we must know clearly what we need, establish standards, and create measurement systems that make standardization possible. So we made everything measurable. That is why systems of units played a crucial role even in civilizations before industrialization.

Horsepower: Quantifying physical labor

Over those thousands of years of history, we roughly quantified the physical labor that humans or animals performed using a figure called horsepower. When you think carefully, does horsepower as a unit make sense? It refers to the work one horse can do, but this horse is a racehorse and that horse is a regular horse. Which horse should serve as the standard? Or this horse carries cargo and that horse is just for riding. Horses actually vary tremendously.

Yet at some point, we simply lumped those horses together and created the unit of one horsepower. By creating this unit of one horsepower, we transformed the previously unquantifiable ability of a species called horses into a single figure. We then gained the ability to express how many horsepower a car has. When you think about it, this seems quite strange.

Shall we look at a representative example? How much horsepower does an airplane have? Airplanes, naturally, are rated in horsepower too, with figures like 320,000. In other words, more than 300,000 horses. But horses cannot fly. In a sense, we take a physical quantity, choose one standard for it, fix one average capability for that standard (the horse), and then quantify. That is how we can describe even an airplane flying through the sky with a single quantified figure.

Thus, within about 100 years, modern automobiles gained propulsive force approaching one thousand horses. Some burn oil and others use electricity. When electricity is used, we employ the unit watts. The force quantified using units like watts has exceeded human physical labor to make the impossible possible. The result is the computation we use today.
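
To make the arithmetic behind these units concrete, here is a minimal sketch that converts horsepower figures into watts. The conversion factors are the standard definitions of mechanical and metric horsepower; the function name is only illustrative, and the 320,000 hp airplane figure is taken from the talk at face value rather than from any specific aircraft.

```python
# Standard conversion factors, in watts per horsepower.
WATTS_PER_MECHANICAL_HP = 745.699872   # imperial (mechanical) horsepower
WATTS_PER_METRIC_HP = 735.49875        # metric horsepower (PS)


def horsepower_to_megawatts(hp: float,
                            watts_per_hp: float = WATTS_PER_MECHANICAL_HP) -> float:
    """Convert a horsepower figure to megawatts."""
    return hp * watts_per_hp / 1_000_000


# The airplane figure quoted in the talk, taken at face value.
airplane_mw = horsepower_to_megawatts(320_000)
# A car with roughly one thousand horsepower.
car_mw = horsepower_to_megawatts(1_000)

print(f"airplane: {airplane_mw:.1f} MW")
print(f"car:      {car_mw * 1000:.0f} kW")
```

Once the "one horse" standard is fixed, an airplane and a car sit on the same scale, which is exactly the point of the unit.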

Man-Months: Quantifying mental labor

How can we quantify mental labor? Though not unique to Korea, we frequently use a term here called man-months. When you think about it, this is quite funny. Does it really seem possible to treat one person's intellectual activity over one month as a fixed unit, when you look at the people around you?

Yet at some point, we quantify even measurement targets that are highly subjective and vary tremendously. The quantifications that succeed become definitions, and once an indicator is measurable enough to quantify, standardization becomes possible. Once something is standardized, scaling up becomes possible.

Quantifying creative activity: The next stage

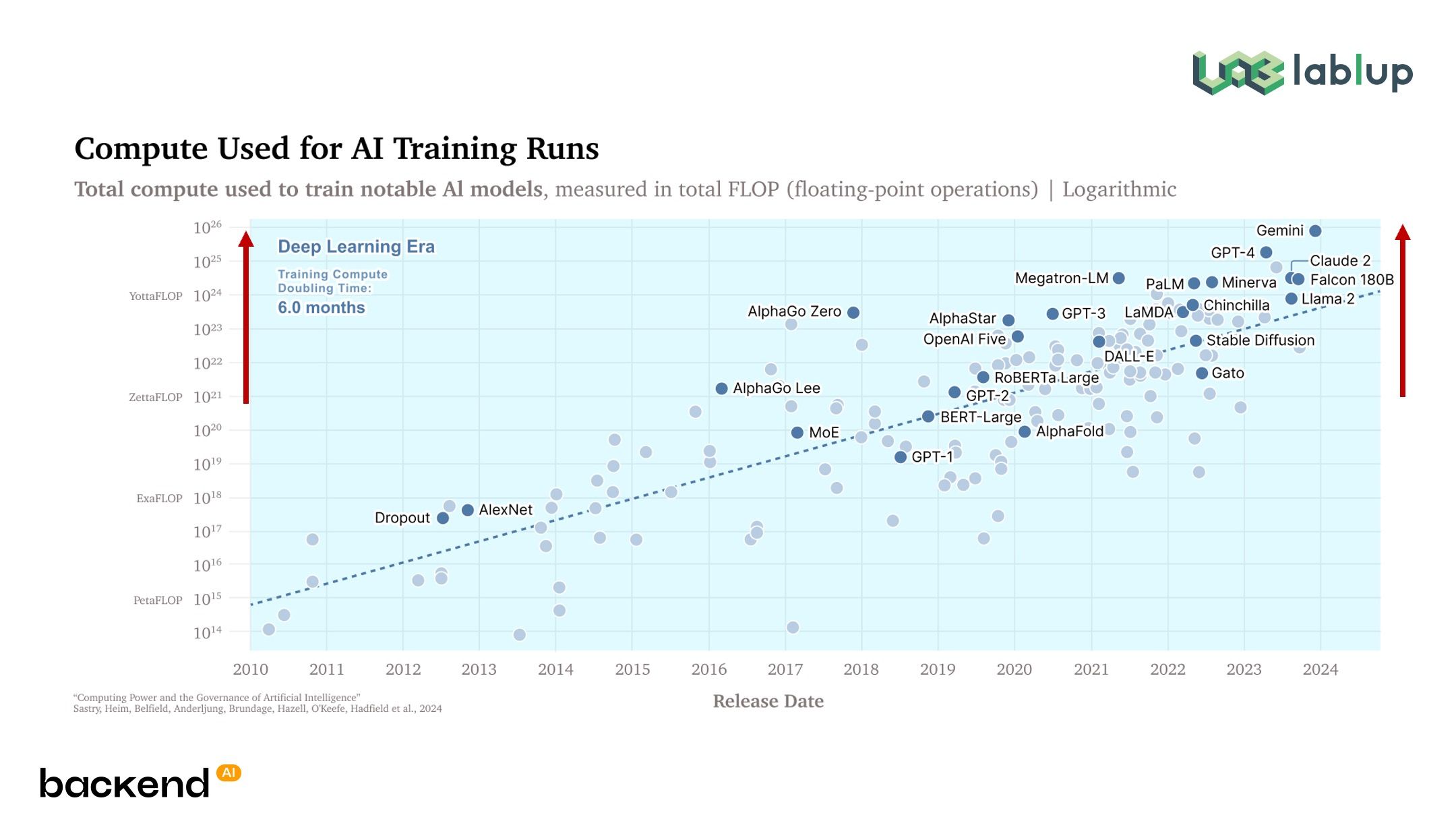

Electricity and silicon are now attempting to replace humanity's third type of labor, which we can think of as creation. Over the past five years, we have become able to train models with more than 10,000 times the computation previously used. The models created this way are machines capable of creation to some degree: not outstanding, but generally adequate. To some eyes, this may seem "still far off, with a long road ahead," but that does not matter much. It is just like the one horse. We have created a unit of quantifiable creative activity.

What should we use to measure the labor substituted over the past five years, namely creative activity? We need a new indicator to quantify the value of this substitution, but it will be difficult to measure. How will we define the unit of creation? How will we quantify the capability of automated creative labor, which will grow tremendously from here on? Naturally, we will need frameworks for this quantification. Machines will keep producing outputs, and we must determine how to quantify the portion of human creativity they replace. This is where our contemplation starts.

Intelligence demand: A new unit

Just as we quantified intellectual labor by time, once we can turn creative activity into a tool in some way, we will be able to quantify something called intelligence demand, just as we calculate electrical power. Probably soon. Like horsepower and electrical power, a figure called intelligence demand will emerge.

This may sound novel, but it is already quite familiar. We take exams. We take the CSAT. We look at academic backgrounds, conduct interviews, and review careers. In a sense, these all serve as indirect indicators of intelligence demand. Creating a startup or venture probably involves some latent intelligence demand and intelligence consumption as well. We simply cannot quantify it yet, so for now we satisfy this demand by working together with good people.

Lablup's next decade: Supplying intelligence

When we contemplated what we would do over the next ten years, our answer was that we could open our future by transforming into a company that quantitatively supplies intelligence demand. In the AI engineering era, as intelligence grows rapidly past an inflection point, what we should probably do after Make AI Accessible and Make AI Scalable is quantify how much intelligence, and of what kind, each stage requires and where it is needed, and become a company that supplies those quantified resources at low prices.

Therefore, we aim to transform into a company that supplies intelligence over the next ten years. This sounds very abstract. People who heard our company's pitch ten years ago probably thought the same. Our goal for the next ten years is to create solutions that meet a specific level of intelligence demand regardless of form and scale, to work with the many partners needed to meet that demand, and to create platforms that turn this into a pipeline.

To accomplish this, we will understand the performance and characteristics of extremely diverse hardware and semiconductors faster than anyone else, and make it possible to measure quantitatively how much intelligence is required or newly created on them. At the same time, through much more dialogue with development companies and with our many current customers and users, we will define what kind of intelligence should be quantified at the point where supply meets need. Our company's next goal is to help with, and take part in, all the scaling up that can happen at that stage.

New Platforms: PALI, Continuum, AI:DOL

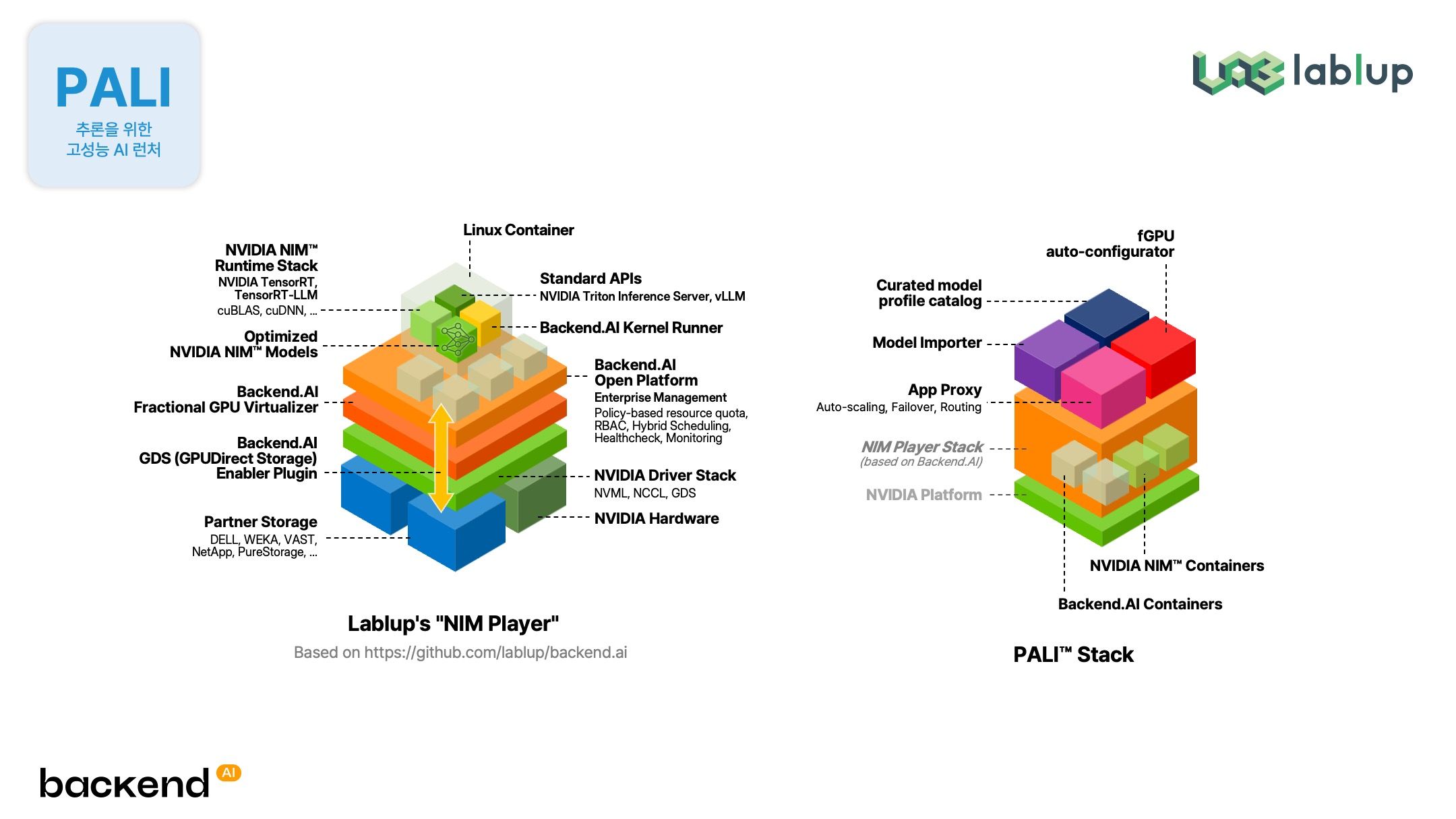

In 2024, we released three platforms, and we said at the time that more projects were sitting in the warehouse waiting to be deployed. We actually developed PALI (Performant AI Launcher for Inference), which achieves flexibility and performance by combining virtualization with TensorRT-LLM, vLLM, and SGLang. We also strengthened the fine-tuning combination of PALI and FastTrack to create and provide a service called PALANG.

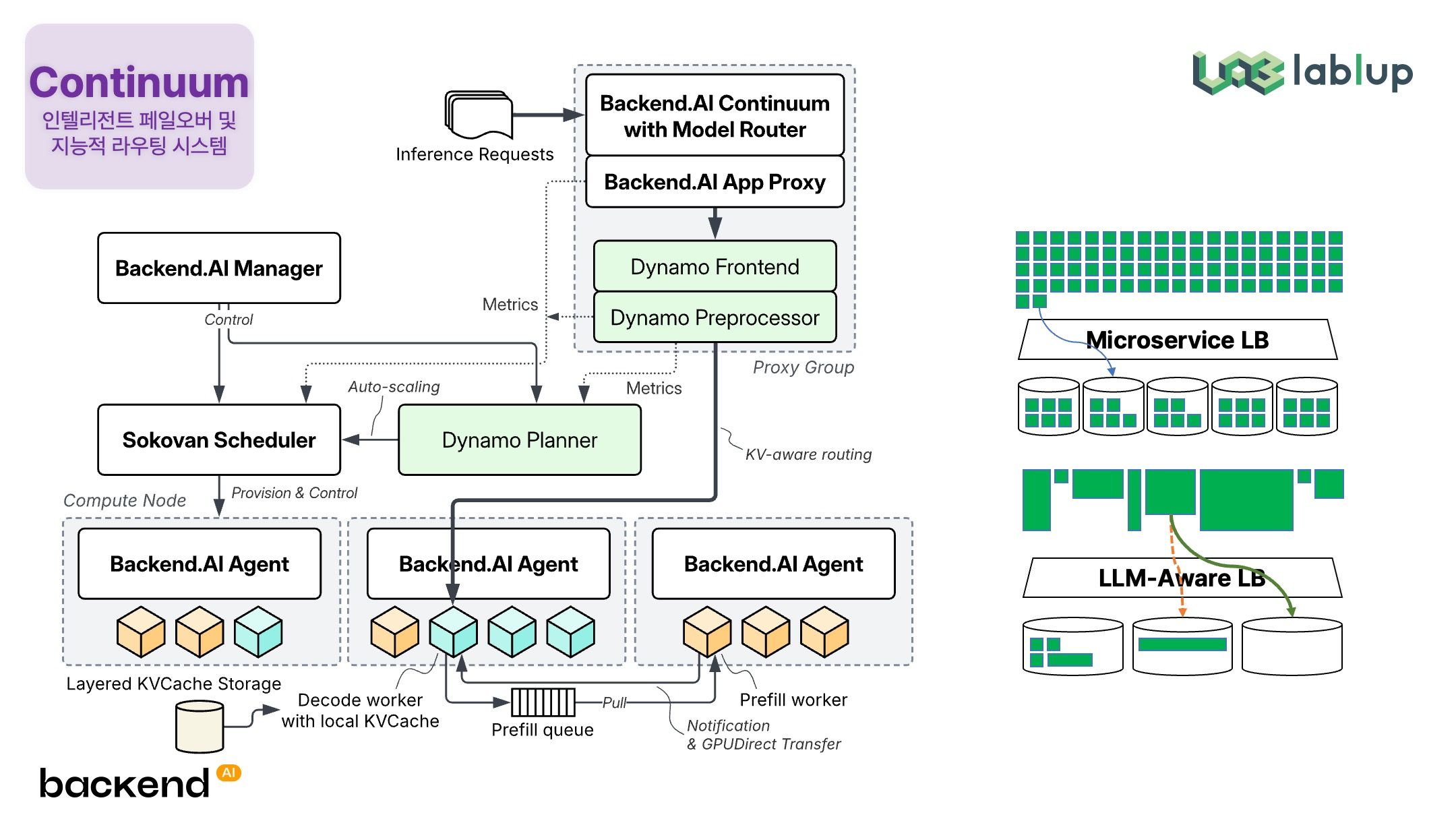

Over the past year, a new tool called Continuum joined that list. It is an adaptive failover system that mixes cloud and on-premise resources, and at the same time a model routing system. Rather than simply routing model APIs, it characteristically operates as a gateway for distributed serving in combination with PALI. For example, it splits the prefill part, which processes the input and fills the cache, and the decode part, which generates the output, across different semiconductors or different farms. Combined with Dynamo and llm-d, it interconnects them through an ultra-high-speed KV cache, prefill cache, and context cache, so that the whole process can be provided through the Continuum router. Continuum is thus one of the core technologies for this, newly added as a system for controlling large-scale distributed systems.
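
As a rough illustration of the two ideas combined here (failover between on-premise and cloud, and separate pools for prefill and decode), the following minimal sketch picks one backend per role. It is not Continuum's actual API or algorithm: the `Backend` class, its fields, and the selection rule are all hypothetical, and the sketch leaves out the KV cache transfer between prefill and decode entirely.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    """Hypothetical description of one serving pool."""
    name: str
    site: str              # "on-prem" or "cloud"
    role: str              # "prefill" or "decode"
    healthy: bool = True
    queue_depth: int = 0   # pending requests; lower is better


def pick_backend(backends, role, preferred_site="on-prem"):
    """Pick a healthy backend for a role, preferring one site and
    failing over to the other site when the preferred one is down."""
    candidates = [b for b in backends if b.role == role and b.healthy]
    if not candidates:
        raise RuntimeError(f"no healthy backend for role {role!r}")
    # Preferred site sorts first (False < True), then the shortest queue.
    return min(candidates, key=lambda b: (b.site != preferred_site, b.queue_depth))


def route_request(backends, preferred_site="on-prem"):
    """Return the (prefill, decode) backend names that would serve one request."""
    prefill = pick_backend(backends, "prefill", preferred_site)
    decode = pick_backend(backends, "decode", preferred_site)
    return prefill.name, decode.name


pool = [
    Backend("onprem-prefill-0", "on-prem", "prefill", queue_depth=3),
    Backend("cloud-prefill-0", "cloud", "prefill", queue_depth=0),
    Backend("onprem-decode-0", "on-prem", "decode", queue_depth=1),
    Backend("cloud-decode-0", "cloud", "decode", queue_depth=0),
]

normal = route_request(pool)
pool[0].healthy = False            # simulate the on-prem prefill farm going down
after_failure = route_request(pool)

print(normal)
print(after_failure)
```

In this toy version, only the prefill stage moves to the cloud after the failure while decode stays on-premise, which is the kind of per-stage decision a disaggregated gateway has to make.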

The second item added this year is AI:DOL, which another session will cover in detail. The name takes its hint from the stones used in Baduk and from the "dol" in Lee Sedol's name, and it originally stands for Deployable Omnimedia Lab. We put AI in front because we use the lablup.ai domain, which made it Lablup AI DOL, but people kept reading it as "idol," so we simply settled on AI:DOL.

It is an advanced authoring tool that replaces the existing 'Talkativot' system. It goes beyond simple chat to generate and use various media and to manage the media it generates. A demo is set up outside, so please try it out as much as you like.

Why build directly: The value of tight integration

Tools like this are widely available as open source. Open WebUI and similar tools exist, so why do we build our own? We believe that quantifying and optimizing intelligence requires tight integration between the service layer and the infrastructure layer. The usual approach is to build the layers separately and then combine them into products and services, but we see areas of convenience and optimization that can only be reached when the layers interact closely. Apple says the same thing.

In DOL's case, tight integration becomes necessary for directly transmitting requests to PALI, conversely for PALI to grasp DOL's state and prepare serving in advance, or for PALANG to automatically schedule fine-tuning without affecting users' current language model usage at all.
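
One way to picture scheduling fine-tuning without disturbing current usage is to look for the quietest window in a load forecast. The sketch below is only an assumption about how such a decision could be made, not a description of how PALANG works: the function name, the hourly forecast values, and the "lowest total load" rule are all hypothetical.

```python
def quietest_window(hourly_load, job_hours):
    """Return (start_hour, total_load) for the contiguous window of
    `job_hours` hours with the lowest total load. The window may wrap
    around the end of the list (for example, across midnight)."""
    n = len(hourly_load)
    if not 0 < job_hours <= n:
        raise ValueError("job_hours must be between 1 and len(hourly_load)")
    best_start, best_total = 0, None
    for start in range(n):
        total = sum(hourly_load[(start + k) % n] for k in range(job_hours))
        # Strict comparison keeps the earliest window when totals tie.
        if best_total is None or total < best_total:
            best_start, best_total = start, total
    return best_start, best_total


# Hypothetical requests-per-hour forecast for one day (24 values, hour 0 first).
forecast = [5, 3, 2, 1, 1, 2, 8, 20, 35, 40, 42, 45,
            44, 43, 41, 38, 30, 25, 20, 15, 12, 10, 8, 6]

start, load = quietest_window(forecast, job_hours=3)
print(f"start fine-tuning at hour {start} (forecast load {load})")
```

A forecast like this is only available when the service layer shares its state with the infrastructure layer, which is the argument for tight integration made above.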

Hardening project and Backend.AI 25.14

Various projects we announced last year have now officially launched, and some have received version upgrades. The two projects we introduced today, together with the backend.ai doctor project we did not introduce, are the first to apply an internal effort called the hardening project, which adds stability and scalability to the existing Backend.AI. With version 25.14, the first release to apply it, and backend.ai doctor, an automatic diagnosis and recovery solution, we have prepared various footholds for scaling up to the next stage.

Two years later, and ten years later

While preparing these things, we thought extensively about the past ten years and the next ten. Ten years from now sounds very far away, and the intelligence quantification I mentioned is still a distant story. However, we can see two years ahead clearly. In two years, the ways of supplying intelligence demand will have changed significantly.

For example, the capability of the GPT-3.5-based ChatGPT that amazed us so much at the end of 2022 will be fully implemented at smartphone scale within one or two years. The scale of data business, and the scale after that, will probably continue to follow. Composable clusters and smaller-scale infrastructures will emerge, as will deployable units based on NPUs. At the larger end, toward hyperscalers, scale keeps rising above 100,000 units, but at the same time it will keep expanding downward as well.

In this era of extremely diversifying scales and platforms, we aim to open a new ten years ahead as a company that develops intelligence, supplies intelligence, and quantifies intelligence. We hope you will watch Lablup's challenges closely.

Closing

We have invited to today's conference many people who are wrestling with very similar concerns in the AI field. You will meet them across infrastructure, modeling, research, and applications in today's three session tracks. We hope you hear about the extremely diverse developments and new experiences at these frontlines and gain experience through plenty of dialogue with the people sitting beside you. If something new is born from your conversations here, we would be very happy, even if it has no direct connection to us.

Welcome again, and thank you for coming.

Please fully enjoy Lablup's frontline and AI's frontline at today's conference. Thank you.