- Enterprise Feature (R2)

End-to-End MLOps Platform for Enterprise AI/ML Operations

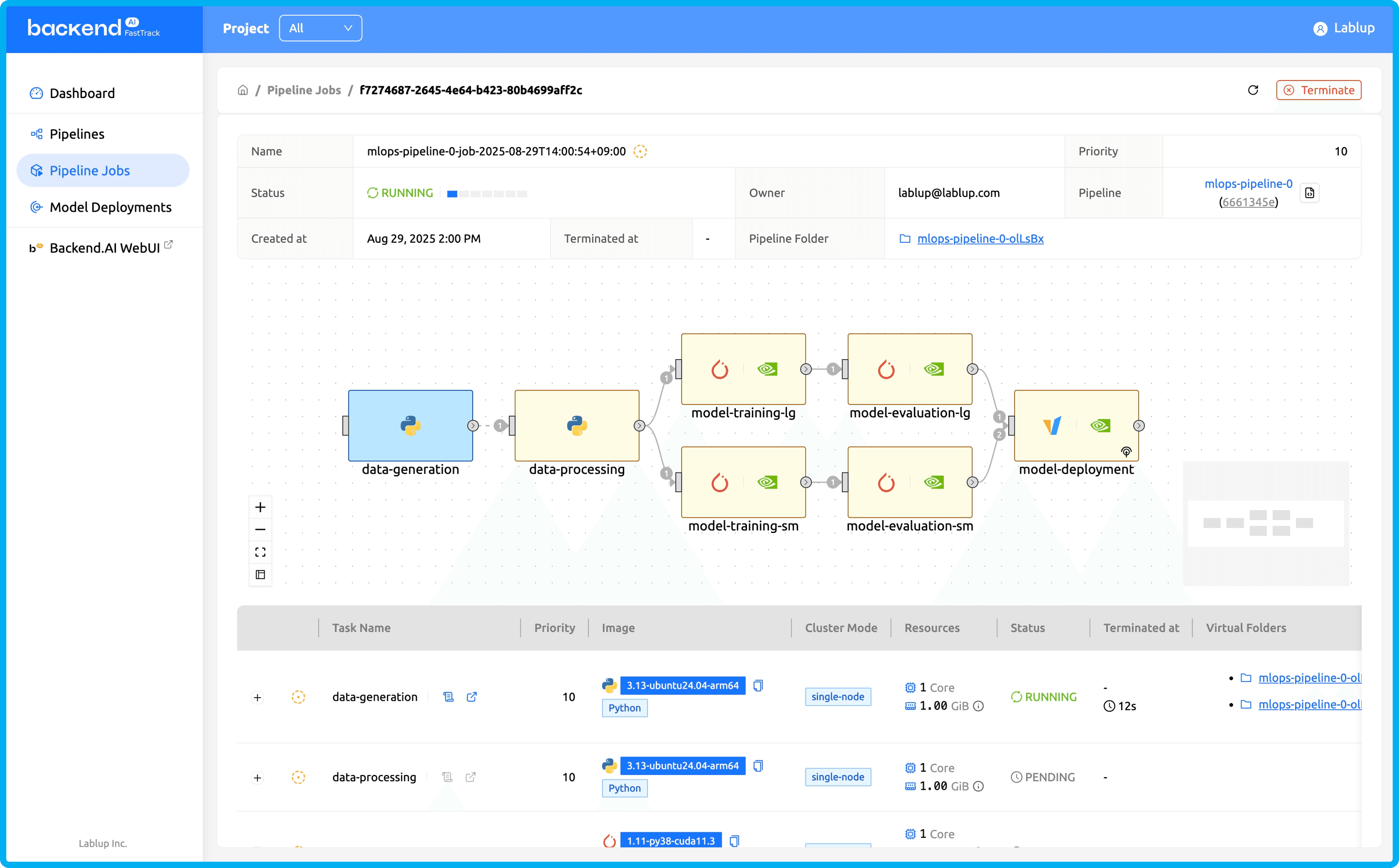

Backend.AI FastTrack 3 is an MLOps pipeline platform that defines and executes AI/ML workflows as DAGs (Directed Acyclic Graphs) on Backend.AI clusters. It unifies the end-to-end pipeline, from data preparation to deployment and monitoring, and optimizes the use of GPUs and AI accelerators for faster delivery and maximum return on infrastructure investment.
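To illustrate what "executing a workflow as a DAG" means, here is a minimal sketch using Python's standard `graphlib` module. It is not FastTrack 3's API; the task names and the `execution_order` helper are hypothetical. Each task lists the tasks it depends on, and a topological sort yields an order in which every task runs only after its dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "preprocess": set(),
    "train": {"preprocess"},
    "validate": {"train"},
    "serve": {"validate"},
}

def execution_order(dag):
    """Return one valid run order; raises graphlib.CycleError if the graph has a cycle."""
    return list(TopologicalSorter(dag).static_order())

print(execution_order(pipeline))
# ['preprocess', 'train', 'validate', 'serve']
```

Tasks with no path between them (for example, two independent training runs) may appear in either order, which is what allows a DAG executor to run them in parallel. A cycle is rejected outright, since no valid order exists.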

Backend.AI FastTrack 3: Simplifying Complex AI/ML Development Pipelines

The perfect resource for every task

The Sokovan™ orchestrator, specialized for AI workloads, efficiently allocates and manages computing resources at different scales for each task.

Pipeline operations from data prep to deployment

Experience a complete end-to-end MLOps platform that manages data preprocessing, training, validation, and model serving as one connected pipeline.

Designed for everyone: GUI & CLI

Beginners can build pipelines through drag-and-drop in the GUI, while experienced developers can use the CLI for granular control and higher productivity.

One pipeline from data prep to deployment

Experience an end-to-end MLOps platform connecting data preprocessing, training, validation, and model serving. Backend.AI FastTrack 3 unifies every stage of the machine learning pipeline: prepare data, train models, validate performance, and deploy models behind a REST API, all in one platform without stitching together multiple tools. Leave the complexity to Backend.AI FastTrack 3.

Optimized resource allocation for all workloads

Backend.AI's flexible resource management and optimized scheduling give Backend.AI FastTrack 3 robust workflow execution. With Backend.AI FastTrack 3, efficient pipeline runs become the standard.

Fast-track your AI development to the next level

- Easy batch job pipeline construction

- Automated multi-node configuration for large model training

- Flexible execution environment setup and resource allocation per task

- Project-based internal deployment & team-specific sharing

- Secure external tunneling support for model serving

- Highly reusable pipeline templates
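Per-task execution environments and resource allocation can be pictured with another small sketch. Everything here is hypothetical and not FastTrack 3's task schema: the `TaskSpec` fields, the placeholder image names, and the `stages` helper are illustrative only, and the fractional GPU values assume a scheduler that can share a GPU across tasks. The helper groups tasks into stages whose members do not depend on each other and reports the total GPUs each stage would request if run concurrently.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter

@dataclass(frozen=True)
class TaskSpec:
    # Hypothetical fields for illustration only.
    image: str          # container image defining the task's environment
    cpus: int
    gpus: float         # fractional values assume GPU sharing is available
    deps: tuple = ()    # names of tasks that must finish first

tasks = {
    "preprocess": TaskSpec("python:3.11", cpus=8, gpus=0),
    "train_a": TaskSpec("example/pytorch-image", cpus=4, gpus=2, deps=("preprocess",)),
    "train_b": TaskSpec("example/pytorch-image", cpus=4, gpus=0.5, deps=("preprocess",)),
    "validate": TaskSpec("example/pytorch-image", cpus=2, gpus=0.5, deps=("train_a", "train_b")),
}

def stages(specs):
    """Return a list of (task names, total GPUs) for each stage of mutually independent tasks."""
    ts = TopologicalSorter({name: set(spec.deps) for name, spec in specs.items()})
    ts.prepare()
    result = []
    while ts.is_active():
        ready = sorted(ts.get_ready())
        result.append((ready, sum(specs[n].gpus for n in ready)))
        ts.done(*ready)  # mark the stage finished so its dependents become ready
    return result

for names, gpus in stages(tasks):
    print(names, gpus)
```

Giving each task its own image and resource request means a light preprocessing step does not hold GPUs, while the training stage can request exactly what it needs.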