News

News about Lablup Inc and Backend.AI

Feb 6, 2026

News

Behind Lablup x Upstage's Phase 1 Win for the Sovereign AI Foundation Model

Lablup

Lablup

Feb 6, 2026

News

Behind Lablup x Upstage's Phase 1 Win for the Sovereign AI Foundation Model

Lablup

Lablup

In January 2026, the Upstage consortium that Lablup is part of successfully passed the Phase 1 evaluation for the Korean government's Sovereign AI Foundation Model project. This initiative aims to protect national AI sovereignty by having the government provide support for GPUs, data, and talent development, while the private sector actively leverages these resources to develop frontier AI models. The goal was to select competitive companies and provide concentrated GPU support rather than distributing resources thinly, enabling the development of truly excellent models. Through this, the government aims to leap into becoming one of the top three AI powerhouses globally while creating mutually beneficial outcomes with industry partners.

Out of 15 consortiums, five were selected as K-AI teams, and after the Phase 1 evaluation, three teams including the Upstage consortium remained. This achievement is particularly meaningful as it was accomplished by the only consortium composed entirely of startups. We sat down with Seungyoun Yi, the consortium lead, Sehwan Joo, AI Research Engineer from Upstage, and Eunjin Hwang, Senior Researcher, and Kyujin Cho, Software Engineer from Lablup, the infrastructure partner, to hear about their journey over the past three months.

1. Understanding the Consortium Structure

Unlike other consortiums participating in the Sovereign AI Foundation Model project, the Upstage consortium is composed of startups. Within the consortium, Lablup handles GPU infrastructure management software, Flitto manages data preprocessing, and Nota takes charge of model compression and optimization, playing core roles in model development. MakinaRocks, Korea Financial Telecommunications & Clearings Institute, Law&Company, VUNO, Day1Company, Orchestro, and Allganize are working on domain-specific model development and service expansion strategies. For industry-academia collaboration, Sogang University and KAIST are participating to share expertise in model design and training.

In preparation for Phase 2, Channel Corporation, FINDA, RLWRLD, HyperAccel, and KETI (Korea Electronics Technology Institute) joined as expansion partners, while Professor Yejin Choi from Stanford University and Professor Kyunghyun Cho from New York University joined as academic partners. We asked about what differentiates the Upstage consortium from others.

Q. What was the rationale behind forming a consortium of startups?

Seungyoun (Upstage) | Global technology trends are changing rapidly, and we believed that agile startups working together would be the fastest way to keep pace. Experience is crucial for startups, but compared to large corporations, it's difficult for startups to gain experience at large scale with significant funding. We see our ability to move faster than any other company while building our own technology stack as a survival strategy for startups and an area where we can outperform large enterprises. That's why we aimed to maximize startup capabilities through the Sovereign AI Foundation Model project.

Q. How did Lablup come to participate in this project?

Eunjin (Lablup) | After the RFP for the Sovereign AI Foundation Model project was released, our CEO received inquiries from various organizations. We decided that Upstage's philosophy toward this project aligned best with ours. The government supports consortiums under the condition that they develop and publicly release sovereign foundation models and data, but some teams weren't planning to release their models. Upstage showed a strong commitment to releasing models and strengthening the ecosystem itself. This matched well with our company's 'open-source philosophy' and direction, so we decided to join forces.

2. Four Months Crossing Model and Infrastructure: The Journey of Upstage and Lablup

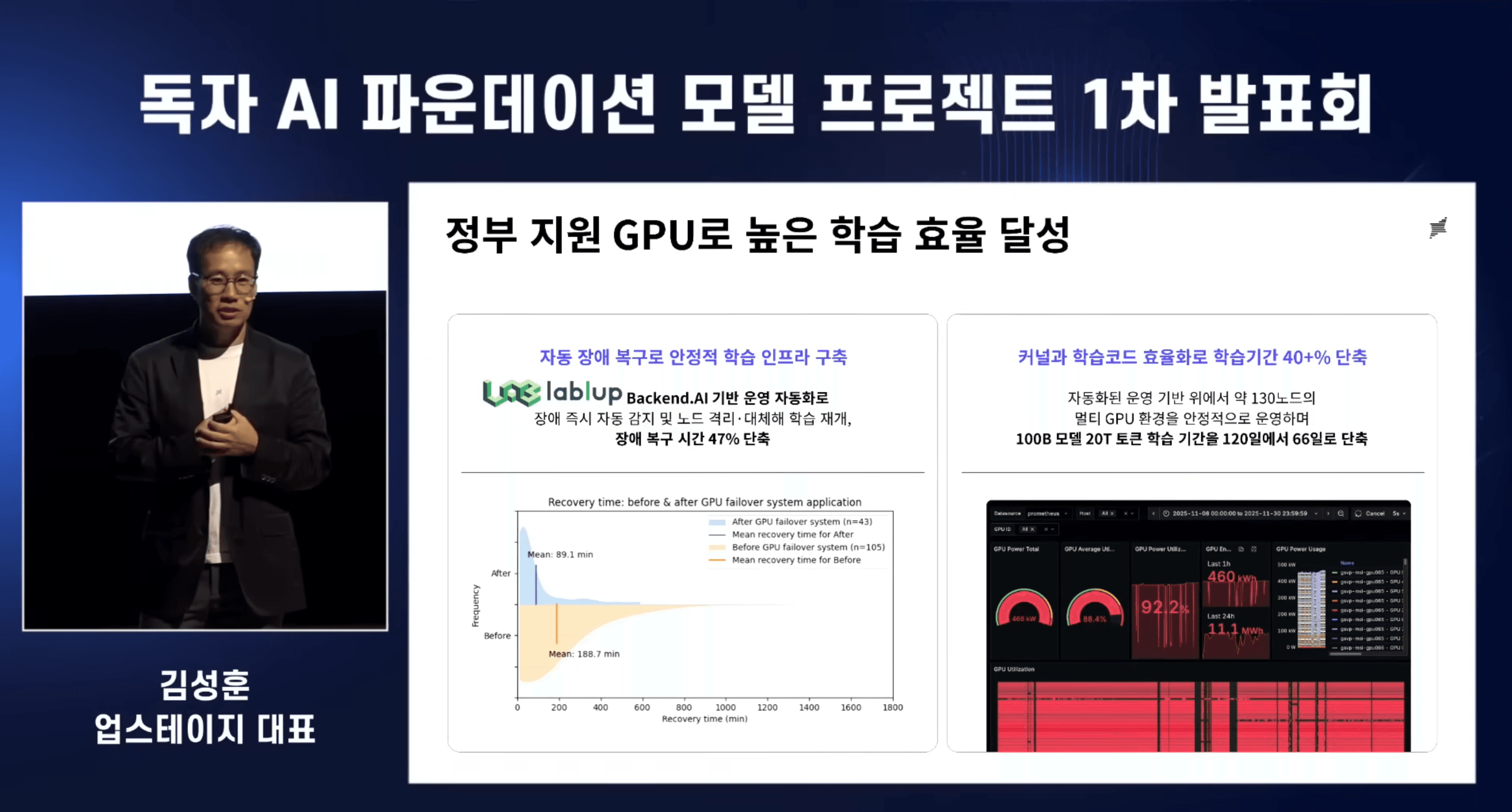

At the Phase 1 presentation of the Sovereign Foundation Model project, Upstage CEO Sung Kim revealed that the consortium achieved high pre-training efficiency using government-supported GPUs. Through Backend.AI-based operation automation by infrastructure partner Lablup, failure recovery time was reduced by 47%. Building on these improvements, along with Upstage's kernel and pre-training code optimization, they achieved a 40% reduction in training time—completing 20 trillion token pre-training in 66 days instead of the projected 120 days. We asked about the technical foundation that enabled these achievements.

2-1. About the Solar Open 100B Model

Q. Can you explain the characteristics of the Solar Open model?

Sehwan (Upstage) | Solar Open is a Mixture of Experts (MoE) model with a total of 102B parameters. The MoE architecture has multiple expert models, activating only the necessary experts depending on the situation. So during inference, only about 12B parameters are activated, making it relatively efficient computationally. Compared to GPT-4o and similar-scale models, Solar Open's performance is competitive, and its distinguishing feature is superior Korean language capability.

Seungyoun (Upstage) | Since we primarily serve enterprise customers, they cannot ignore cost efficiency. If we built a 100B dense model, not many companies could actually use it. With about 10B activated parameters during inference, we can achieve fast inference speed while fully leveraging the capabilities of a 100B-scale model, which is why we determined this model size. In a sense, it was about how to efficiently serve a high-performance model—a perspective aligned with startup philosophy.

Q. How did you obtain training data? How did you address the Korean language data shortage?

Seungyoun (Upstage) | Upstage invested significant effort, and consortium partners helped as well. Flitto processed and refined the data we collected, and we received substantial data from the government. Beyond Flitto, service expansion partners specialized in various domains—for example, LawNCompany provided legal and financial domain data and benchmarks.

Sehwan (Upstage) | For insufficient data, we built our own synthetic data generation pipeline. Synthesizing data is faster and more efficient than direct collection. While the synthesis process produces some low-quality data, we filtered those out or validated them through experiments before use. We focused on optimizing how much high-quality data to inject at each training stage through experimental results, and since we have a relatively smaller model, we concentrated on training with as much data as possible compared to other consortiums.

Seungyoun (Upstage) | Quality control was absolutely critical. Typically, if we collect 100% data, less than 1% actually gets used for training. Filtering through multiple stages to generate only high-quality data is our most important goal—that's how small-scale models can achieve good performance.

2-2. The Solid Foundation Enabling Model Training: Infrastructure

Q. Infrastructure setup and optimization for model training must have been crucial.

Kyujin (Lablup) | The Sovereign Foundation Model project has a structure where GPU support scales based on how much companies open their models and data. The Upstage-Lablup consortium decided to release models as open source, securing conditions for maximum GPU support from the government. Lablup installed our AI infrastructure operation platform, Backend.AI, on the GPU cluster provided by the government, enabling Upstage to use the cluster at maximum throughput while minimizing training time loss during model training.

Eunjin (Lablup) | Some consortium partners use GPUs for model compression or optimization work, requiring resource partitioning. We support making this easy, and during Phase 1 evaluation, we not only facilitated the model training process but also created a structure where load balancing and scaling for evaluators to test the model all ran through Backend.AI model services.

Q. What GPU equipment did you operate?

Kyujin (Lablup) | We used NVIDIA B200 GPUs located in SK Telecom's latest GPU cluster 'Haein.' The B200 is a high-performance chip based on NVIDIA's Blackwell architecture with exceptional capabilities. Our consortium received and used a total of 504 GPUs. During training, we discovered that when using all 63 nodes, the time loss from a single failure was quite significant. We learned that a training strategy keeping 2-3 GPUs as backups could ultimately achieve higher training efficiency. So we ultimately deployed 480 GPUs for pre-training and used the remaining 24 GPUs for lower-priority tasks, but kept them ready for immediate deployment if issues arose with the 480.

Q. Were there any unexpected failures or bottlenecks during training?

Kyujin (Lablup) | There were roughly two main challenges. First was optimizing the distributed storage we received to synergize with the consortium's training strategy. High-bandwidth distributed storage for large-scale training has various models showing different performance characteristics. We worked closely with SK Telecom (GPU infrastructure provider), the storage provider, Upstage, and Lablup to ensure these design philosophies didn't create friction with the consortium's pre-training strategy.

During actual training, since we were training models from scratch, the massive scale of equipment presented various types of problems we'd never encountered before. For example, training jobs would stop without warning due to latency issues that emerged as equipment scale increased. Rather than being anyone's fault, these were problems we all encountered walking a path none of us had traveled before. Looking back, these were unavoidable problems when training on such large-scale GPU infrastructure, and whether domestic or international, very few places document these large-scale GPU infrastructure training experiences in detail.

Q. If GPU failures are unavoidable, quick recovery seems crucial.

Kyujin (Lablup) | In large-scale model training, multiple GPU servers are actually bundled together like one giant chip. That means if even one fails, the entire system can become idle. So minimizing downtime and recovering quickly when failures occur is essential.

Sehwan (Upstage) | From a model training perspective, GPU failures are critical. If a GPU fails and training stops, even after restarting, you have to roll back to the last checkpoint and retrain that period, so actual time loss is even longer. Checkpoints are points where intermediate training states are saved—we kept these intervals as short as possible and carefully coordinated checkpoint timing to enable quick training resumption.

Q. The process of detecting and recovering from failures must not be easy.

Kyujin (Lablup) | Correct. There's a famous saying: 'Machines don't lie.' But in reality, we found that machines not only don't lie—they often don't speak up even when they're in pain. Fundamentally, GPUs, servers, and storage continuously output various metrics like temperature, utilization, and memory usage in real-time. We can monitor in fine detail—down to the temperature and utilization of individual microchips inside GPUs. However, certain types of failures are difficult to detect numerically. In large-scale AI training environments, even small failures can lead to major bottlenecks, so simply looking at individual metrics isn't enough. We focused on combining individual metrics or analyzing patterns to discover hidden failures.

Once we detect failures, we immediately remove the problematic equipment from the work pool and deploy replacement equipment. We built a system that automatically redistributes training tasks from the interruption point once replacement equipment is operational. We worked hard to ensure Upstage colleagues could rest peacefully during the Chuseok holiday, and looking at the results, it seems to have worked well.

Eunjin (Lablup) | Actually, there was an incident during the Chuseok holiday where the system stopped and automatically restarted, and nobody even knew about it. (laughs)

Q. I heard Lablup utilized a system monitoring tool called all-smi in this process.

Kyujin (Lablup) | The commonly used tool for collecting various metrics from NVIDIA GPUs is DCGM (NVIDIA Data Center GPU Manager). However, for monitoring the Sovereign Foundation Model cluster, Lablup's self-developed solution called all-smi was used. all-smi is a tool that displays various metrics from not only NVIDIA GPUs but also AMD and Intel XPUs, as well as NPUs from manufacturers like Furiosa and Rebellions, through a unified interface. This integration allows us to build a monitoring system that isn't locked into a specific platform and can flexibly respond to future expansions of various domestic accelerator clusters.

Q. Beyond infrastructure optimization supported by Lablup, I heard Upstage also conducted efficiency improvements.

Sehwan (Upstage) | We achieved significant training efficiency improvements through PyTorch's compilation features and checkpoint optimization. Particularly in large-scale model training, how you distribute model parameters across hundreds of GPUs is key. We adopted a hybrid sharding approach.

Fully sharded data parallel (FSDP) distributes model parameters across all GPUs, showing high memory efficiency, but high inter-GPU communication volume causes slowdowns in large-scale clusters. Hybrid sharding, on the other hand, applies full sharding within nodes and data parallelism between nodes, reducing communication overhead while maintaining memory efficiency.

When we applied hybrid sharding to our 480 GPUs, we significantly reduced communication bottlenecks compared to full sharding and improved overall training speed. These optimization techniques combined to noticeably improve training throughput over previous approaches.

3. Model Training Experience with Lablup's Backend.AI

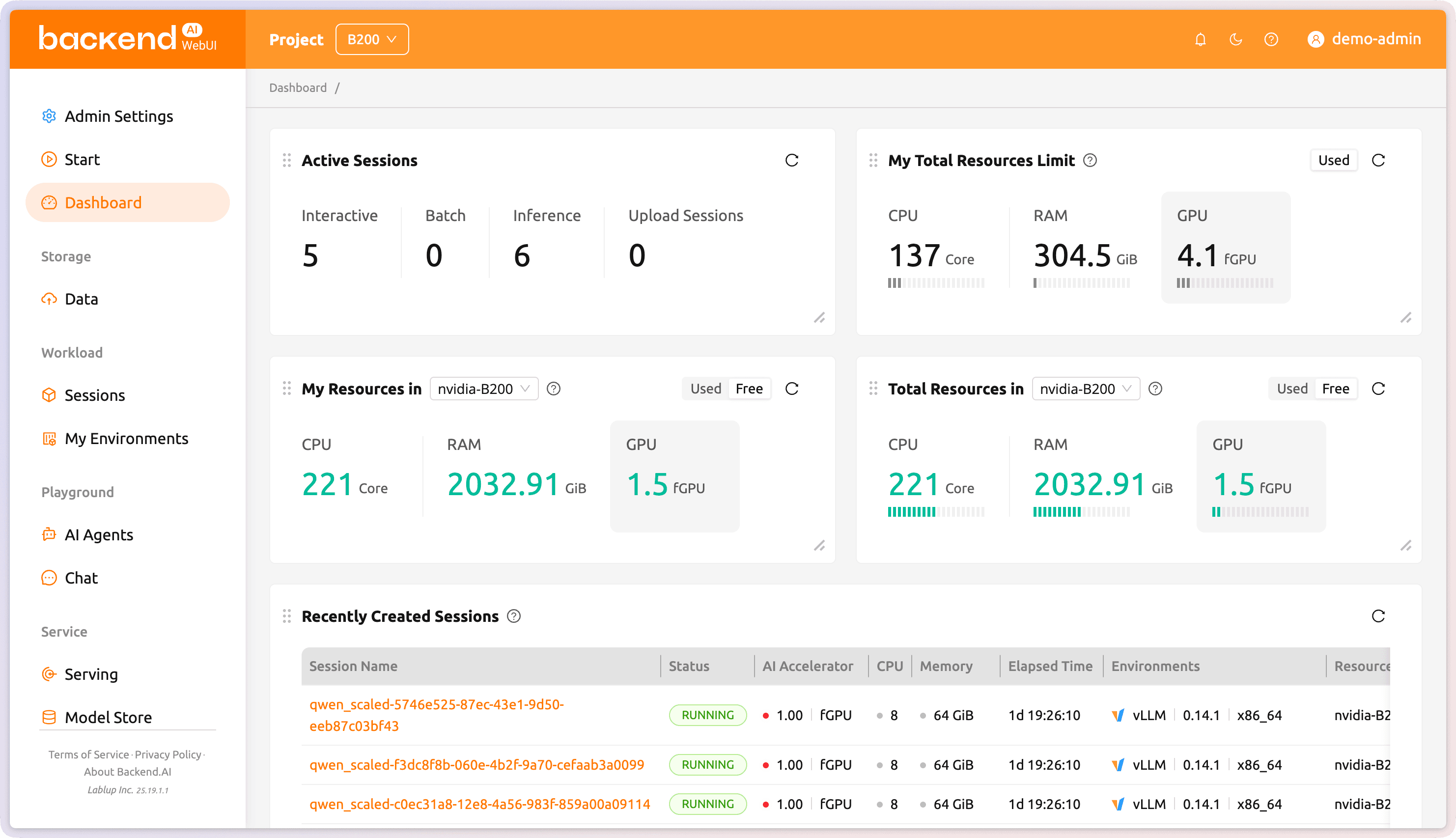

The Upstage consortium conducted Solar Open model training based on Lablup's Backend.AI. Backend.AI is a container-based cluster platform for hosting AI frameworks and high-performance computing workloads. With vendor-neutral characteristics supporting heterogeneous accelerators from various manufacturers including NVIDIA, Intel, and AMD, it allows flexible infrastructure configuration without being locked into a specific hardware ecosystem. We asked the researcher in charge of model training about their experience training large-scale language models in the Backend.AI environment from a user experience perspective.

Q. What was different about Backend.AI compared to platforms you used before?

Sehwan (Upstage) | I've previously used Slurm as a job scheduler on AWS and Oracle Cloud. Slurm operates with GPU equipment always powered on and environments pre-configured, so submitted jobs execute immediately. Backend.AI, on the other hand, creates a new container for each job request, always starting from a clean initial state—a technical implementation difference. Both approaches have pros and cons, but the biggest advantage I found in Backend.AI's container-based approach was environment isolation. Team members each train or evaluate in different environments, and individual container isolation meant absolutely no environment conflicts. Everyone can configure their own independent environment, and my work never affects or is affected by others' work, enabling stable parallel operations.

Kyujin (Lablup) | To elaborate a bit, Backend.AI's experimental environment is similar to creating multiple user accounts on a desktop. Slurm is like multiple users sharing one PC account, where everyone's work can mix without distinction. Backend.AI creates isolated experimental environments per user, operating like a Windows PC where multiple people can freely create and delete files in their own home directories.

Q. After using Backend.AI, which advantages resonated most with you?

Sehwan (Upstage) | The initial onboarding process was really easy. Since I've used Slurm before, I keep comparing—Slurm requires all operations through CLI commands, creating a high initial barrier. Backend.AI, on the other hand, has a well-designed intuitive WebUI, making it easy to explore and immediately utilize new features. GPU utilization monitoring was particularly convenient—I could check real-time usage with just a few clicks. Being able to grasp GPU status at a glance on the dashboard helped us efficiently distribute workloads without idle resources and maximize overall cluster utilization.

Eunjin (Lablup) | Actually, watching Upstage users was quite impressive for me. I gave them about 30 minutes of simple instruction initially, and they used it so well. They even utilized features I hadn't mentioned, and I thought, 'These people are really skilled.'

Sehwan (Upstage) | Functionally, what helped most was Backend.AI FastTrack 3, the MLOps platform that works with Backend.AI. When Backend.AI automatically detects GPU failures and excludes problematic GPUs from the resource pool, Backend.AI FastTrack 3 automatically restarts pre-training tasks, and the resumed pre-training is deployed avoiding the problematic GPU nodes. Once configured, even if failures occur, the system runs automatically without my intervention, so I could sleep comfortably at night, and we significantly reduced idle time due to GPU failures.

4. Solar Open 100B Completed Through Lablup-Upstage-SK Telecom Collaboration

Successfully executing the Sovereign AI Foundation Model project required three companies moving as one: SK Telecom with the hardware, Lablup creating the AI operation environment using that hardware, and Upstage training models in that environment. Throughout the interview, both Lablup and Upstage representatives unanimously stated that today's Solar Open wouldn't exist without SK Telecom's full cooperation. We asked about SK Telecom's role in providing infrastructure to the Upstage consortium on the 'HAEIN' cluster.

Q. How did collaboration among SKT, Lablup, and Upstage work?

Kyujin (Lablup) | Although they're not here today, SK Telecom colleagues made tremendous efforts. While the ultimate goal of failure response is automated recovery without human intervention, none of us had experience—SK Telecom had never built such a massive-scale GPU cluster, we'd never operated on such a cluster, and Upstage had never trained models on such a cluster, so everyone experienced trial and error initially.

But even at late hours like 1 AM on weekends, when failures occurred, SKT immediately notified us, our monitoring system quickly identified failures, and when failures were resolved, SK Telecom promptly confirmed recovery without delay—they really supported our consortium with complete dedication. When we later analyzed failure response times, incidents that initially took over an hour were mostly resolved within 20-30 minutes later on. I think the Sovereign AI Foundation Model project created this precious opportunity for domestic companies to gain experience solving problems at this large scale, which is difficult to encounter otherwise.

Seungyoun (Upstage) | The team including SK Telecom GPUaaS Product Development Team Leader Tae Hyung Kim, Kyungmin Kim, Dongyoung Kim, and their colleagues made incredible efforts. Honestly, without the infrastructure they provided and Lablup managing operations, it would have been quite challenging. At the Sovereign Foundation presentation, we conducted live demos and booth demos on SK Telecom's GPU infrastructure. I remember Leader Kim staying proudly at our booth for quite a while.

Kyujin (Lablup) | An episode from Phase 1 evaluation preparation—the cluster we received was designed purely for training, and we later learned we needed to use the same cluster to provide services to general user evaluators. There were many technical challenges different from configuring infrastructure for pre-training. They actively helped with those aspects too, and we got through without problems. I understand SKT is making various technical preparations for Phase 2 as well. If we do another interview like this, maybe we should invite SK Telecom colleagues to join. (laughs)

5. Lablup and Upstage Heading Toward the Future

Q. Concluding Phase 1, what achievements did Upstage and Lablup gain?

Seungyoun (Upstage) | I see two major achievements. First, proving our technical capabilities by building a good model completely from scratch. Second, walking this path together while creating this model. From partners directly contributing to model development to expansion partners strategizing model dissemination—the fact that everyone achieved this together is what matters most.

Kyujin (Lablup) | We primarily supply infrastructure platforms B2B to customers. From a business perspective, when we sell Backend.AI, customers do their work and we support them from behind to ensure smooth operations. We shouldn't and can't know what customers do with our infrastructure. So we'd never been tightly coupled with business to jointly solve many challenges all the way through model training. Training models using 504 B200 GPUs simultaneously on SK Telecom's Haein cluster was an incredibly fresh experience.

Q. What are Upstage's goals or vision for Phase 2 of the Sovereign AI Foundation Model project?

Seungyoun (Upstage) | We will achieve overwhelming first place. We have a vision to create a larger model with lessons learned from Phase 1, dominating with performance while also offering excellent usability. Supporting Korean, English, and Japanese is a given, and expanding to multimodal—handling not just text but also images and video—will be core to Phase 2. Executing these well and continuing plans through Phase 3 and into next year to create models is our consortium's goal.

We've opened our model license for commercial use. While Solar has a proprietary license, we made it nearly equivalent to the Apache License. We hope it becomes a foundation for creating specialized models, developing your own models referencing Solar Open's structure or technical reports, and contributing to the open-source ecosystem.

Sehwan (Upstage) | During Phase 1, our internal model team and the entire consortium experienced trial and error and learned tremendously. From a practitioner's perspective, we're working to refine processes and many other aspects, applying improvements so we can move faster and further ahead.

Q. What are Lablup's goals or vision for Phase 2 of the Sovereign AI Foundation Model project?

Eunjin (Lablup) | We'll continue doing our best in the same role as Phase 1—supporting Upstage to develop models with maximum available resources. During Phase 1, Upstage actively provided various feedback on Backend.AI and Backend.AI FastTrack. We're incorporating that feedback and actively developing to integrate new features into the core engine for Phase 2.

Kyujin (Lablup) | Among additional Backend.AI features planned for release targeting early March is resource preemption. This sets priorities among running tasks, and when the cluster lacks resources and a higher-priority task is created, it terminates lower-priority tasks and automatically reclaims allocated resources. This allows training strategies that always concentrate GPU resources on high-priority tasks. The Sovereign Foundation Model project operates on extremely tight resource usage plans, making every single GPU precious. In this situation, we plan to increase training efficiency through features that allocate substantial resources to pre-training first while assigning lower priority to relatively less important tasks.

Eunjin (Lablup) | From our product perspective, starting Phase 2, our consortium partners will actively use Backend.AI. While Phase 1 focused on supporting single training cases, in Phase 2 we can more actively improve Backend.AI based on diverse feedback coming from consortium partners.

※ The Solar Open model is commercially usable under a proprietary license similar to the Apache License and is released as open source. The Upstage consortium aims for overwhelming achievements in Phase 2 with further enhanced models and infrastructure, and the close collaboration among Lablup, Upstage, and SKT will continue.

Lablup will continue doing our utmost throughout the entire process of creating AI models reflecting Korea's sovereignty.

This interview was conducted in January 2026.

Interviewees: Eunjin Hwang (Lablup), Kyujin Cho (Lablup), Seungyoun Yi (Upstage), Sehwan Joo (Upstage)

Interviewer, Editor, and Photographer: Jinho Heo